Key Takeaways — brief reading, less than 30 seconds

- Watermarks are about provenance, not piracy. The audience is the well-meaning forwarder who needs to know where the asset came from, not the professional thief with an AI removal tool.

- Three industry modes plus one hybrid: Visible (default for drafts), Invisible/steganographic (provenance at scale), Per-recipient (leak tracing in regulated industries), and Scope-aware (members get the original, external viewers get the watermarked copy — what most teams need).

- Four media types, four mechanics: image (libvips composite), video (ffmpeg overlay + segment-level HLS coverage), audio (voice tag at intervals or high-frequency tone), PDF (durable stamp into the object stream, not viewer overlay).

- Watermark drafts, in-review cuts, brand assets sent externally. Skip internal working files and post-handover deliverables. Watermarking everything is a way of watermarking nothing.

- Survival tactics: diagonal across the centre, tiled patterns, marks adjacent to critical content, lower-third + corner for video, voice tags spaced closely enough that one lands in any 30-second clip.

- The workflow pattern: watermarks generated at share time (master stays clean), enforced server-side (members vs external), toggled per media type, removed at contractual handover.

- The honest limit: AI removal exists, invisible marks die under compression, per-recipient costs rise with scale. Watermarks are a deterrent and a provenance trail, not a security control. When they aren’t enough, the next layer is legal.

Editor's note: YetOnePro applies visible watermarks across image, video, audio, and PDF for portal and share delivery, driven by per-workspace and per-surface rules. Workspace members always receive originals; external delivery is watermarked when a rule fires — and falls back to the original while a backfill is in progress, or when an admin has explicitly opted a portal out. This guide is tool-agnostic; the principles apply whether you use a DAM, a review tool, or a custom export pipeline.

Watermarks don’t stop theft. AI tools strip the visible ones in seconds and crush the invisible ones under any decent re-encode; vendors who sell “tamper-proof” marks are selling theatre. What watermarks actually do is travel as a label — a “FOR REVIEW” bug in the corner of a draft tells the marketing director who’s two forwards into a thread, opening it on her phone between meetings, that she isn’t looking at the approved version. The rest of this guide is what follows from that: which mode, which media type, what survives a crop, and the threshold past which the next layer of protection is a contract, not a logo.

Why Watermark at All

The reason is provenance, not piracy deterrence. When a draft moves through three hands without context, the watermark is the only thing that travels with it.

Three concrete cases where the watermark earns its keep:

- Stage signalling. A draft watermarked “FOR REVIEW” arrives in a forwarded email and the recipient knows immediately it isn’t the final. The marketing director who saw the draft on a Slack screenshot doesn’t mistake it for the campaign asset.

- Source attribution. When the asset surfaces at a partner’s agency three months later, the watermark answers “is this ours or did it come from somewhere else?” without anyone having to email the original sender.

- Casual-use deterrence. A reviewer who screenshots a draft to send to a colleague pauses when they see the watermark. The professional with an AI removal tool doesn’t. The first behaviour is what’s worth interrupting.

Watermarks belong at the “files we trust people with” layer of asset distribution, the layer that the guide on how to share files with clients covers more broadly. They don’t replace access controls; they sit on top of them. The two layers do different work.

Visible, Invisible, and Scope-Aware

Industry vocabulary has three modes, plus a hybrid that some platforms ship. The mode you pick depends on the threat model.

Visible. Diagonal text or a logo across the asset, semi-transparent, signalling “draft” or “for review only.” Survives screenshots. The default everyone should ship at the “in-progress sharing” layer.

Invisible (steganographic). Encoded into the pixel data or audio waveform, undetectable to the eye or ear. Survives some crops and re-exports but degrades sharply under heavy re-encoding: forensic-watermarking benchmarks put the survival floor near JPEG quality 60, and Instagram, Facebook, and WhatsApp routinely transcode below that. Useful for provenance tracing at scale; not a substitute for visible markings on drafts.

Per-recipient. The same asset rendered with the recipient’s name or email embedded in the watermark itself. Used for leak tracing in regulated industries: when an asset surfaces in the wild, the watermark identifies which recipient’s copy was shared. Operationally expensive (one watermarked render per share) and carries privacy concerns about embedding personal data in distributed files.

Scope-aware. The same asset, two versions: workspace members see the original, external portal and share viewers see the watermarked copy. Cleaner than per-recipient because there’s no privacy concern about embedding emails in shared files. Cheaper to compute because there’s one watermarked version per asset rather than N. Doesn’t do leak-tracing the way per-recipient does, but covers the “internal team gets the original; external eyes get the watermark” case — the common one for teams that share with clients and agencies.

Pick the mode that matches the threat model. Scope-aware is right for “we’re sending drafts to agencies and clients.” Per-recipient is right for “we suspect a known leak source.” Invisible is right for “we ship at scale and care about provenance after the fact.” Visible is the default everyone should ship.

Watermarking by Media Type: Image, Video, Audio, PDF

Most watermarking guides stop at images. Images, video, audio, and PDF each have different mechanics and different failure modes.

Image

The well-trodden case. Composite a logo or text overlay onto the image at a configured position, opacity, and rotation. Tooling: libvips for batch processing, ffmpeg on still images, Photoshop for manual one-offs. What survives re-encoding at JPEG quality 80: most diagonal-pattern visible watermarks. What dies at JPEG quality 50: invisible steganographic data and fine-detail visible marks at low contrast. The composite step itself runs in well under 10ms per image: PyVips benchmarks 100,000 watermark composites in roughly eight seconds (about 80 microseconds each), about 10x faster than Pillow; full decode-composite-encode at web resolution adds a couple hundred milliseconds depending on file size.
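The position, opacity, and rotation settings reduce to a little geometry and one blend formula. The sketch below is library-free Python, not libvips code: the function names `diagonal_angle_deg` and `blend_channel` are hypothetical, and it assumes straight (non-premultiplied) alpha on 8-bit channels.

```python
import math


def diagonal_angle_deg(width: int, height: int) -> float:
    """Angle in degrees of the corner-to-corner diagonal of a width x height image.

    Rotating the watermark text by this angle makes it run along the diagonal,
    so part of it always crosses the centre of the frame.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    return math.degrees(math.atan2(height, width))


def blend_channel(background: int, mark: int, opacity: float) -> int:
    """Blend one 8-bit channel of the mark over the background pixel.

    opacity runs from 0.0 (mark invisible) to 1.0 (mark fully covers the pixel).
    """
    if not 0.0 <= opacity <= 1.0:
        raise ValueError("opacity must be between 0.0 and 1.0")
    return round((1.0 - opacity) * background + opacity * mark)
```

A 1920×1080 frame gives a diagonal of roughly 29 degrees; a square image gives 45. In a real pipeline the imaging library does the per-pixel blend, and these two numbers are just the configuration fed to it.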

Video

Harder. ffmpeg overlay filters (with scale2ref to keep aspect ratios sane) handle the basics. The complication is HLS adaptive streaming: a watermarked stream is composed of segments, and rip-tools can splice un-watermarked segments back together if the watermark only covers a known fraction of the timeline. Synamedia names stream splicing alongside dropped segments and jitter as standard pirate tactics, and Irdeto adds collusion attacks that blend multiple watermarked copies to weaken attribution. The pattern that works: segment-level watermarking with deterministic 40% coverage per asset, seeded by the asset ID so the pattern is unique to each video but stable across re-encodes. Enough to defeat trivial splicing, light enough to keep encoding fast. For visible video watermarks, a lower-third “FOR REVIEW” bug plus a corner mark gives redundancy; a single-region watermark gets cropped out the moment someone records the screen.
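One way to get deterministic 40% coverage seeded by the asset ID is to rank segments by a hash and mark the lowest-ranked 40%. This is a sketch under assumptions, not any vendor's scheme: the function name `watermarked_segments`, the SHA-256 ranking, and the `asset_id:index` key format are all illustrative choices.

```python
import hashlib


def watermarked_segments(asset_id: str, segment_count: int, coverage: float = 0.4) -> list[int]:
    """Pick which HLS segment indexes carry the mark for this asset.

    Segments are ranked by SHA-256 of "asset_id:index" and the lowest-ranked
    share is selected, so the same asset ID and segment count always yield
    the same pattern, while different assets get different patterns.
    """
    if segment_count < 0:
        raise ValueError("segment_count must not be negative")
    if not 0.0 <= coverage <= 1.0:
        raise ValueError("coverage must be between 0.0 and 1.0")

    target = round(segment_count * coverage)

    def rank(index: int) -> bytes:
        return hashlib.sha256(f"{asset_id}:{index}".encode("utf-8")).digest()

    chosen = sorted(range(segment_count), key=rank)[:target]
    return sorted(chosen)
```

Because the selection depends only on the asset ID and the segment count, a re-encode that keeps the same segmentation reproduces the same pattern without storing it anywhere. A re-encode that changes the segment count would shift the pattern, which is a limitation of this particular sketch.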

Audio

The strangest case. Audio has no corner to put an overlay in; the mark has to live in the sound itself. Two approaches: a voice tag injected at intervals (“property of [brand], for review only” spoken over a momentary volume dip on the original audio every 60 seconds) or an inaudible high-frequency tone embedded as a steganographic marker. The voice tag is conspicuous but survives re-encoding; the high-frequency tone is imperceptible to listeners but dies under any decent re-encode. For pre-release music or podcast drafts shared with reviewers, the voice tag is the workable answer. A TTS-generated voice tag (“property of [brand]”) is what most modern DAMs ship; uploaded voice recordings are an option for teams that want a specific brand voice.
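The interval arithmetic behind a voice-tag schedule is easy to get wrong, so here is a minimal sketch. It assumes tags start at zero and repeat at a fixed start-to-start interval; the function names and the 4-second tag length used in the examples are hypothetical, and the guarantee does not cover the last stretch of a file after the final tag.

```python
def tag_start_times(duration_s: float, interval_s: float, tag_s: float) -> list[float]:
    """Start times for voice tags: the first at 0, then every interval_s seconds.

    A tag is only scheduled if it fits completely before the end of the audio.
    """
    if interval_s <= 0 or tag_s <= 0:
        raise ValueError("interval_s and tag_s must be positive")
    starts = []
    t = 0.0
    while t + tag_s <= duration_s:
        starts.append(t)
        t += interval_s
    return starts


def max_interval_for_clip(clip_s: float, tag_s: float) -> float:
    """Largest start-to-start interval that puts a complete tag in any clip of clip_s.

    Worst case: the clip begins just after a tag starts, so the next tag must
    both start and finish inside the clip, which needs interval + tag <= clip.
    """
    if tag_s >= clip_s:
        raise ValueError("the tag must be shorter than the clip")
    return clip_s - tag_s


def clip_contains_full_tag(starts: list[float], tag_s: float, clip_start_s: float, clip_s: float) -> bool:
    """True if at least one tag both starts and ends inside the clip."""
    clip_end_s = clip_start_s + clip_s
    return any(s >= clip_start_s and s + tag_s <= clip_end_s for s in starts)
```

With a 4-second tag, a 60-second interval leaves long stretches where a 30-second clip contains no complete tag, and the largest interval that closes the gap is 26 seconds rather than 30.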

PDF

Two approaches with very different durability. The naive approach overlays the watermark in the viewer: it works for view-only links and fails the moment someone downloads the PDF and opens it in a different viewer. The durable approach stamps the watermark into the PDF object stream itself, page by page, so the mark is part of the file and survives any viewer. Microsoft 365 sensitivity-label content markings, when applied via the Office desktop apps, are stamped “directly into the content and remain visible until somebody modifies or deletes them”, and PDFs created from those documents inherit the marking, an example of the durable approach in the enterprise document space. (Microsoft’s separate “dynamic watermarks” feature is viewer-only and does not survive export.) For pharma MLR drafts and any document that ships outside the original tool, durable PDF watermarking is the only option that survives.

Across all four media types, “all sizes get watermarked” is the rule that prevents the most common failure: a 200×200 thumbnail without a watermark gets hotlinked into a deck and becomes the face of the asset. If the system only watermarks the main resolution and leaves thumbnails clean, the thumbnail is the leak channel.
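The “all sizes” rule is simple to check mechanically. The sketch below assumes a hypothetical `Rendition` record with a `watermarked` flag; a real system would read the equivalent from its own rendition metadata.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    """One served size of an asset (hypothetical shape, for illustration)."""
    name: str
    width: int
    height: int
    watermarked: bool


def unwatermarked_renditions(renditions: list[Rendition]) -> list[str]:
    """Names of renditions that would reach external viewers without a mark."""
    return [r.name for r in renditions if not r.watermarked]
```

Run against every externally served size, an empty result means no thumbnail or preview is left as a clean leak channel.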

What to Watermark (and What to Skip)

Watermarking everything is a way of watermarking nothing — when every asset says “PROOF”, the team stops reading. The discipline is being explicit about which assets earn the watermark and which don’t.

Always watermark:

- Drafts shared with clients before approval

- In-review video cuts and audio mixes

- Unreleased campaign assets sent to agencies for production work

- Brand assets sent to external partners (agencies, freelancers, vendors). The brand-management piece covers what counts as a brand asset; this is the layer that protects the asset in transit.

- Anything in an approval round before the final sign-off; the piece on the design approval process covers the workflow these drafts move through.

Sometimes watermark:

- Final deliverables to clients during a contractual review window. The watermark stays on for the proof period and comes off at delivery.

- Stock-licensed previews shared with stakeholders before purchase commitment.

Never watermark:

- Assets the client paid for and now owns — watermarking after handover is a contract problem, not a copyright one. Standard commercial practice is to strip the watermark when the deliverable ships.

- Internal working files where the team is working on their own assets.

- Anything past the contractual handover. Once the asset is delivered, the watermark comes off.

The over-watermarker’s mistake is treating watermarks as a reflexive insurance policy rather than a stage signal. If every asset in the team’s library carries a “CONFIDENTIAL” watermark, recipients learn to ignore it — and the actually-confidential ones lose the signal that distinguishes them.

Will the Watermark Survive the Crop?

Most watermarks lose to 90 seconds with a crop tool. Design rules that survive the crop:

- Diagonal text crossing the asset’s centre. Cropping the corners doesn’t remove the mark because part of the diagonal sits in the middle of the frame. The single most effective pattern for visible image watermarks.

- Repeating tiled pattern. One tile is always inside any reasonable crop. Less aesthetically clean than a single diagonal but harder to remove without ruining the image.

- Embedded near critical content. Place the watermark adjacent to the product, the face, or the headline — anything where cropping the watermark out also crops out the part that makes the asset useful.

- For video: lower-third + corner mark, both. A single-region watermark gets cropped or recorded out. Two regions in different parts of the frame force the recorder to give up on cropping.

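- For audio: voice tags at intervals short enough that any 30-second clip contains one. A 60-second tag interval means a 30-second clip might miss it. Dropping to 30–45 seconds for high-stakes assets narrows the gap; guaranteeing a complete tag in every 30-second clip takes a start-to-start interval no longer than 30 seconds minus the tag’s length.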
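The tiled-pattern rule can be turned into a number: how far apart can tiles sit before some crop escapes them? The sketch below works along one axis in whole pixels (apply it separately to width and height). The function names, and the idea of picking a “smallest crop worth defending”, are assumptions for illustration.

```python
def max_tile_pitch(min_crop_px: int, mark_px: int) -> int:
    """Conservative largest start-to-start tile spacing along one axis.

    Any crop at least min_crop_px wide then contains one complete mark,
    because spacing + mark width never exceeds the crop width.
    """
    if mark_px <= 0 or min_crop_px <= mark_px:
        raise ValueError("the crop must be wider than the mark")
    return min_crop_px - mark_px


def tile_origins(image_px: int, pitch_px: int) -> list[int]:
    """Left (or top) edge of each tile along one axis of the image."""
    if pitch_px <= 0:
        raise ValueError("pitch_px must be positive")
    return list(range(0, image_px, pitch_px))


def crop_contains_full_mark(origins: list[int], mark_px: int, crop_start: int, crop_px: int) -> bool:
    """True if some mark lies entirely inside the crop [crop_start, crop_start + crop_px)."""
    crop_end = crop_start + crop_px
    return any(o >= crop_start and o + mark_px <= crop_end for o in origins)
```

For a 150-pixel mark and a smallest useful crop of 400 pixels, the spacing comes out at 250 pixels. Widening the spacing to 500 pixels leaves crops that contain no complete mark.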

Compression and resize behaviour varies by format. Lossy formats degrade invisible watermarks far more than visible ones; the piece on image file formats covers which formats compress how. The takeaway for watermark survival: a visible diagonal mark on a JPEG at 80% quality looks fine and survives most consumer edits; an invisible mark on the same JPEG at 50% quality is gone before it leaves the encoder.

The Watermark Workflow in a DAM

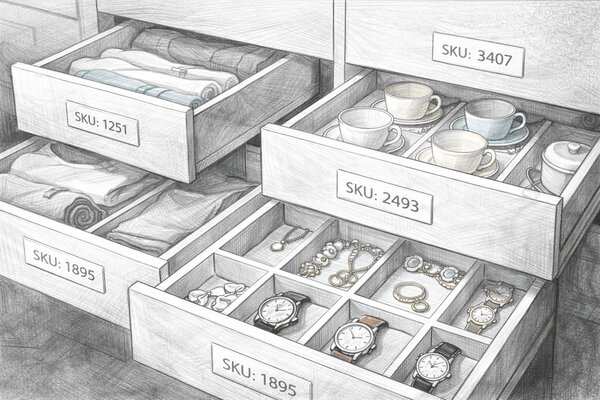

Watermarks generated manually per-asset don’t scale past a small team. The pattern that works is automatic and lifecycle-aware: the DAM generates the mark at share time, not at upload.

- Generated at share time, not at upload. The master file in the DAM stays clean. The watermarked version is rendered when an external share or portal is created. Re-rendering means the watermark can reflect the recipient (for per-recipient mode), the share configuration (for branded portals), or the workspace’s current settings (for scope-aware mode).

- Scope-enforced server-side. Workspace members never see the watermarked version; external viewers never see the original. A naive “show watermarked thumbnail in the portal” approach gets bypassed by anyone who opens the original asset URL. The enforcement has to be at the asset-resolution layer, not at the rendering layer.

- Toggled per workspace per media type. One logo for image watermarks, a different one for video, a voice tag for audio: each is configured independently because they’re different artefacts with different appropriate marks.

- Removed at the contractual handover. The DAM tracks asset state; when an asset moves from “in review” to “delivered”, the watermark comes off in the same workflow event. Photo studios run this exact pattern: watermarked proofs through the review cycle, clean deliverables after payment.
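Enforcement at the asset-resolution layer means one server-side function decides which file a request gets, before any URL is handed out. The sketch below is a hypothetical shape for that decision, not any product's API. It assumes two viewer scopes and two lifecycle stages, and it fails closed when the watermarked rendition is not ready. That last choice is an assumption: a platform could instead fall back to the original during a backfill, as the editor's note at the top describes for one product.

```python
from enum import Enum


class Viewer(Enum):
    MEMBER = "member"
    EXTERNAL = "external"


class Stage(Enum):
    IN_REVIEW = "in_review"
    DELIVERED = "delivered"


class RenditionNotReady(Exception):
    """Raised when an external viewer needs a watermarked copy that does not exist yet."""


def resolve_rendition(viewer: Viewer, stage: Stage, watermark_enabled: bool, watermarked_ready: bool) -> str:
    """Return which rendition to serve: "original" or "watermarked"."""
    # Workspace members always work from the clean master.
    if viewer is Viewer.MEMBER:
        return "original"
    # After contractual handover, or when no rule applies, the mark comes off.
    if stage is Stage.DELIVERED or not watermark_enabled:
        return "original"
    # Fail closed (assumption): never leak the original while the render is pending.
    if not watermarked_ready:
        raise RenditionNotReady("watermarked rendition has not been generated yet")
    return "watermarked"
```

Because the portal, the share link, and any direct asset URL all call the same function, there is no rendering-layer shortcut that returns the original to an external viewer.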

The integration question matters too. Watermarks generated in the DAM but accessed via a separate review tool (Frame.io, Wipster) end up applied inconsistently: assets routed through one path are watermarked, and assets routed through the other aren’t. The DAM either handles the watermark for every external surface or accepts that the watermark is only as reliable as the most-permissive surface.

Where to Find Watermarking

Two markets, almost no overlap. The first is DAMs and dedicated tools that ship visible watermarking as a checkbox feature — right for the “mark drafts before they leave the team” case the rest of this article describes. The second is specialised forensic-watermarking vendors that sell per-recipient or per-session marks to studios, broadcasters, and image agencies. Different price brackets, different audiences, different problems.

Visible Watermarking (DAMs and Bulk Tools)

Most of the names below ship visible watermarking as part of a broader DAM offering: configure a mark, scope it to portal/share viewers or anonymous downloads, and the rendered version is what external eyes see. Pricing is enterprise / quote unless noted.

Enterprise DAMs with Built-in Watermarking

- Bynder: enterprise / quote. Watermarked derivatives applied automatically to portal and API downloads, gated by Advanced Rights so only approved users pull a clean copy.

- Brandfolder (Smartsheet): enterprise / quote. Brandfolder-wide and asset-level watermarking on JPG/PNG/MP4/MOV previews and public links; trusted users still download clean originals.

- Frontify: enterprise / quote. Brand-portal-led DAM that supports visible watermarks plus per-recipient invisible marks for tracing pre-launch creative leaks.

- Canto: enterprise / quote. Image and text watermarks configurable per portal, applied to previews and downloads for anonymous users while admins access originals.

- MediaValet: enterprise / quote. Visible watermarks plus a Steg.AI-powered forensic watermark integration that survives screenshots and per-frame video extraction.

- PhotoShelter for Brands: enterprise / quote (Brands plan); photographer plans from ~$15/mo. Photo-first DAM/portal used by sports teams, universities, and newsrooms; watermarks JPEG downloads from Lumen portals using a custom PNG.

Dedicated Bulk Watermark Tools

- Watermarkly: $19.95/yr or $39.95 lifetime. Browser-based bulk watermarking for photos, PDFs, and video; auto-scales the mark per image orientation.

- Visual Watermark: one-time licence (Basic/Plus/Premium tiers; Basic restricts commercial use). Desktop and web app for batch image, video, and PDF watermarking.

- iWatermark Pro: ~$20 one-time desktop, $3.99 mobile. Long-running Mac/Windows/iOS/Android app from Plum Amazing aimed at individual photographers and small studios.

Forensic / Per-Recipient Watermarking (Specialised Vendors)

Different market entirely. These vendors sell to Hollywood studios, OTT platforms, sports broadcasters, and image agencies that need to identify which subscriber or pre-release reviewer leaked a file. All enterprise / quote pricing: if there’s a public price tag, it’s not the right vendor for the use case.

Video / OTT Forensic Watermarking

- NAGRA NexGuard: client- and server-side watermarking for pre-release and live OTT; deployed by major Hollywood studios and AWS MediaPackage customers.

- Friend MTS (ASiD): subscriber-level forensic watermarking (embedded, client-composited, or edge-switched A/B) bundled with content monitoring; used by sports broadcasters and OTT operators.

- Verimatrix: imperceptible client- and server-side session-based watermarking for OTT video (HTML5 included); part of the broader Verimatrix anti-piracy suite.

- Irdeto TraceMark: cloud-based forensic watermarking for live and VOD; integrated with Ateme and used by DAZN to trace pirated streams.

- ContentArmor (Synamedia): bitstream video watermarking that embeds a unique mark without re-encoding, with cloud detection via REST API; Hollywood-approved for dailies, screeners, premium VOD, and live sports.

Invisible Image and Audio Watermarking

- Imatag: enterprise / quote. Patented pixel-level invisible watermarking that survives compression, cropping, and screenshots; adopted by image agencies including dpa Picture Alliance.

- Digimarc: enterprise / quote. Veteran invisible-watermark vendor for images and audio; Digimarc Recover ties watermarks to C2PA Content Credentials so provenance survives metadata stripping.

Provenance Standard (Adjacent)

- Content Credentials (C2PA): free open spec; Adobe enterprise tooling via GenStudio / Firefly Services. Cryptographically signed metadata standard backed by Adobe, Microsoft, BBC, and Sony; tamper-evident and re-attachable via paired invisible watermarks if stripped.

Where Watermarks Don’t Work (the Honest Limitations)

Watermarks are useful at one specific layer of asset protection. Treating them as a security control is a category error that creates a false sense of safety.

AI-driven removal exists. Tools that take a watermarked image and reconstruct the original are now generally available; they improve monthly. TechCrunch documented Google’s Gemini 2.0 Flash stripping watermarks from stock photos in March 2025; the underlying reconstruction attack was published years earlier in Google’s own 2017 CVPR research, which warned that “much of the world’s stock imagery is currently susceptible to this circumvention.” A determined attacker with a free Gemini account or a roughly $25-per-month subscription to a removal tool strips most visible watermarks in seconds and the result usually looks clean. Vendors that market “unremovable” watermarks are using the word as a marketing aspiration rather than a technical claim: the test of unremovability is whether a current AI tool can remove it, and the answer is almost always yes.

Invisible watermarks degrade sharply under heavy re-encoding. Forensic-watermarking benchmarks put the survival floor near JPEG quality 60, and Instagram, Facebook, and WhatsApp routinely transcode below that — the steganographic payload is gone before the post lands. Newer neural watermarks have improved against screen-cam and rebroadcast scenarios; classical steganographic schemes have not. Either way, the practical envelope is “the asset went somewhere it shouldn’t have but I have the original-quality file to compare against,” not “a low-quality phone photo of the gallery wall.”

Per-recipient watermarks have process costs. They embed personal data (names, emails) in every shared asset, which raises privacy and GDPR questions. They require a re-render per share, which is operationally expensive at scale. They produce thousands of nearly-identical assets that have to be tracked individually for the leak-trace use case to work. The benefit is real for high-stakes regulated industries; for most teams it’s overkill.

Watermarks add friction for the casual reuser. The determined thief is a different problem. If someone wants to steal your work badly enough, the watermark extends the time they need by maybe ten minutes. AI removal collapses the cost of the determined-thief case to almost zero. The casual case — the well-meaning forward, the screenshot from a meeting, the partner who didn’t realise the file wasn’t for distribution — is where watermarks actually do work.

When watermarks aren’t enough, the next layer is legal. Licence management, takedown notices, contractual clauses, copyright registration. Watermarks are a deterrent and a provenance trail; they are not a security control or a property right. The legal-protection layer (registered copyright, licence terms, DMCA §512 takedowns, and §1202 CMI claims when a watermark is stripped to enable infringement) is what picks up where watermarking ends. The AI image tagging piece is a reminder: the same model that’s good at categorising images is good at removing watermarks from them. Different prompt, same capability.

When to Reach for a Watermark

The job watermarks do well is small and specific: signal which stage an asset is at, and label which team it came from, in a way that survives a casual screenshot or a forward through three inboxes. Done well, the recipient who opens the file knows what they’re looking at without having to ask. Done badly — CONFIDENTIAL on every internal file, no scope distinction, no removal at handover — the watermark becomes noise the team learns to ignore. Watermarks are not theft prevention. They are not a property right. They sit on top of access control and below the legal layer; they protect nothing on their own, and that is fine, because the access and legal layers are doing different work.

The next move is small. Audit the outbound paths where assets actually leave the team — review portals, client share links, agency handoffs, partner downloads, the email attachment someone is about to send right now. For each path, decide whether the recipient should see the original or a watermarked copy, and configure the system to ship the right version automatically. Watermarking by hand on a per-asset basis works at the scale of one client; configured-once at the scope-aware layer of a DAM is what scales past that.